AI-Powered Content Recommendations for the Engineering Community

The upshot

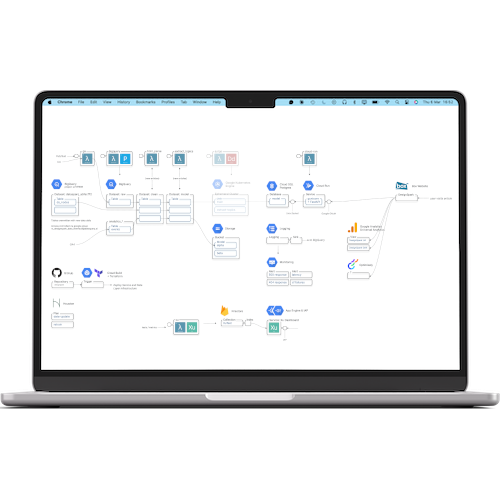

DesignSpark is a large community hub for engineers, but with thousands of articles on the site, users struggled to find the right technical content. Datasparq built a real-time recommendation engine and a semantic "metadata factory" on Google Cloud to increase community engagement and drive product discovery.

The opportunity

DesignSpark is a content-rich site, but a high volume of content often leads to "search fatigue." RS Group needed a way to help users discover new content more easily, which in turn encourages them to learn, interact, and ultimately purchase components from the main RS site.